How To Check To See If Blocked Pages Are Indexed

You put a robots.txt on your site expecting it to keep Google out of certain pages. But you worry – did you do it correctly? Is Google following it? Is the index as tight as it could be?

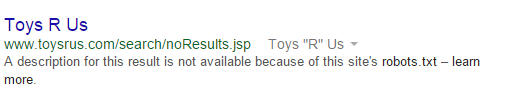

Here’s a question for you. If you have a page blocked by robots.txt, will Google put it in the index? If you answered no, you’re incorrect. Google will indeed index a page blocked by robots.txt if one of your own pages links to it (without a rel=”nofollow” on the link), or if another website links to it. The page doesn’t usually rank well because Google can’t see what’s on it, but it still has PageRank passed to it. What a waste! In the results, Google will probably show the listing with a note that a description is not available because of the site’s robots.txt.

2 Ways To Check For Indexed Pages You Thought Were Blocked

Don’t worry, I have a couple of relatively painless ways to check your own indexation.

Using Google.com (More Manual)

Visit your robots.txt file and look at the blocked directories. Let’s use Toys R Us for example:

- Check https://www.toysrus.com/robots.txt

- Take the first blocked directory (as of 3/22/2015, it’s /search/)

- Query Google.com using site:toysrus.com inurl:/search/ (this will attempt to find any URL that has /search/ on the toysrus.com site)

- Take note of any listings stating “A description for this result is not available because of this site’s robots.txt”

- Repeat with all the other blocked directories

- Find all linking pages and determine your best course of action (e.g., a nofollow attribute, a meta-noindex, or the “remove URL” tool in Google Webmaster Tools)

In some cases, this trick will return noisy results. If you tried the example above, you probably didn’t find blocked URLs until about page 5, where you see “In order to show you the most relevant results, we have omitted some entries very similar to the 47 already displayed. If you like, you can repeat the search with the omitted results included.” Since Toys R Us also uses “search” as a URL parameter, Google matches those URLs too. This is not the “search” we’re looking for.
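If a robots.txt has many blocked directories, building the queries by hand gets tedious. Here is a minimal Python sketch of the first few steps above: it reads the Disallow lines for a user-agent group and turns each one into a site: / inurl: query to paste into Google. The function names `blocked_paths` and `site_queries` are my own, and the sample robots.txt is made up for illustration (only /search/ comes from the Toys R Us example). It skips wildcard rules because inurl: can’t express them, and it doesn’t run the searches for you.

```python
def blocked_paths(robots_txt: str, agent: str = "*") -> list[str]:
    """Return the Disallow paths in the group(s) naming the given user-agent."""
    paths = []
    applies = False         # does the current group name our agent?
    in_agent_lines = False  # are we inside a run of User-agent lines?
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if not in_agent_lines:
                applies = False  # a new group starts here
            in_agent_lines = True
            if value.lower() == agent.lower():
                applies = True
        else:
            in_agent_lines = False
            # An empty Disallow blocks nothing, so it is skipped.
            if field == "disallow" and applies and value and value not in paths:
                paths.append(value)
    return paths


def site_queries(domain: str, paths: list[str]) -> list[str]:
    """Build one Google query per blocked path, skipping wildcard rules."""
    return [
        f"site:{domain} inurl:{path}"
        for path in paths
        if "*" not in path and "$" not in path
    ]


# Hypothetical robots.txt content, not the real Toys R Us file.
sample = """\
User-agent: *
Disallow: /search/
Disallow: /checkout/   # made-up entry
Disallow:

User-agent: examplebot
Disallow: /private/
"""

for query in site_queries("toysrus.com", blocked_paths(sample)):
    print(query)
```

You still have to run each query yourself and look for the “description is not available” listings, so this only saves the copy-and-paste work.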

Summary

SERPitude is certainly a great tool for other purposes as well, and it is solid for understanding what the SERPs are showing your searchers. Now that you’ve identified your blocked pages, the real fun comes in tracking them down, deindexing them, and plugging the links with a rel=”nofollow”. Go to it, Sherlock!